The web is full of information! Your websites probably use APIs for maps, Twitter, IP geolocation, and more. But what about data that's on the web but has no API available?

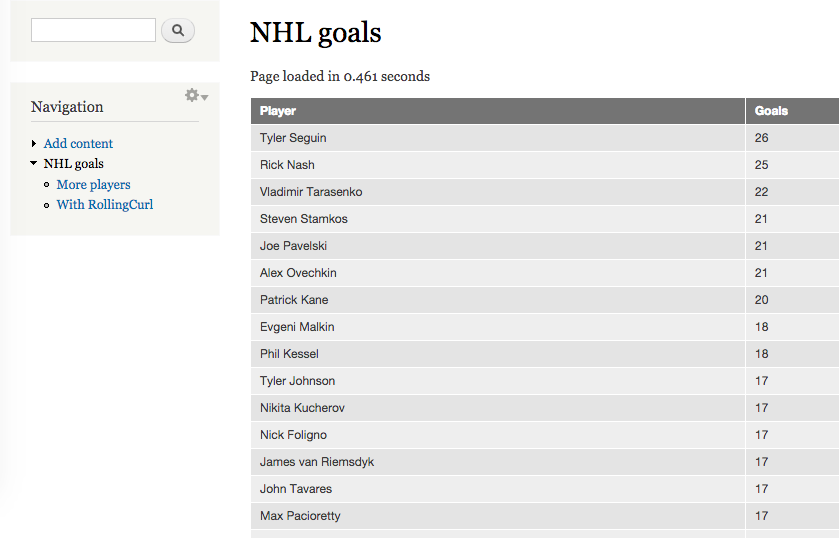

Suppose we wanted to display on our website a list of all the players in the NHL, and how many goals they've scored. The information we need is clearly on the web. But there's no public API that I can find.

Getting started

To fetch the data without an API, we'll use a technique called 'web scraping'. We'll fetch the web page that contains our data, and then parse the data out of the resulting HTML. The NHL's player stats page lists the top scorers (its URL appears in the code below). If we view the page's HTML source, we can see that the data we want is in a table with class "data". Each row represents one player, with the player's name in column one (counting from zero), and the number of goals scored in column five.

We'll use PHP's built-in DOMDocument to parse the HTML, and DOMXPath to locate things within the page:

    // From raw HTML, return an array that maps players' names to goals.
    function nhl_goals_scrape($html) {
      // A failed fetch gives us nothing to parse.
      if (!is_string($html) || $html === '') {
        return array();
      }

      // Parse the HTML. The @ suppresses warnings about malformed markup.
      $doc = new DOMDocument();
      @$doc->loadHTML($html);

      // Find the rows of the first tbody within a table that has class "data".
      $xpath = new DOMXPath($doc);
      $rows = $xpath->query('(//table[contains(@class, "data")]/tbody)[1]/tr');

      // Look at each row of the table.
      $return = array();
      foreach ($rows as $tr) {
        // Pull out column 1 and column 5. Counting only td cells means
        // whitespace between cells can't shift the indexes.
        $cells = $xpath->query('td', $tr);
        if ($cells->length < 6) {
          continue;
        }
        $name = trim($cells->item(1)->textContent);
        $goals = intval($cells->item(5)->textContent);
        $return[$name] = $goals;
      }
      return $return;
    }

    $html = file_get_contents('http://www.nhl.com/ice/playerstats.htm?fetchKey=20152ALLSASALL&viewName=goals&sort=goals&pg=1');
    print_r(nhl_goals_scrape($html));

We've got our data! It looks like this:

    Array
    (
        [Tyler Seguin] => 26
        [Rick Nash] => 25
        [Vladimir Tarasenko] => 22
        < snip 26 players >
        [Zach Parise] => 14
    )

Scraping multiple pages

There's a problem, however: there are only thirty players on that page. What if we want more than that? If we change the pg= part of the URL, we get a different page. To get more results, let's fetch a whole bunch of pages:

    // Get the URL for a page of NHL goal scorers.
    function nhl_goals_url($page) {
      return sprintf('http://www.nhl.com/ice/playerstats.htm?fetchKey=20152ALLSASALL&viewName=goals&sort=goals&pg=%s', $page);
    }

    function nhl_goals_many($pages) {
      $return = array();

      // Fetch URLs one at a time.
      for ($i = 1; $i <= $pages; ++$i) {
        $url = nhl_goals_url($i);
        $html = file_get_contents($url);
        $scraped = nhl_goals_scrape($html);
        $return += $scraped;
      }
      return $return;
    }

    print_r(nhl_goals_many(10));

Now we get even more output:

    Array
    (
        [Tyler Seguin] => 26
        [Rick Nash] => 25
        [Vladimir Tarasenko] => 22
        < snip many more players, 296 of them! >
        [Alexander Edler] => 4
    )

However, this process was quite slow. It takes six seconds on my computer to fetch just ten pages!
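If you'd like to take a measurement like this yourself, one simple approach is to wrap the call with microtime(true). The sketch below is only an illustration: timed() is a hypothetical helper name, and the workload is a stand-in that sleeps briefly so the snippet runs without touching the network. Swapping in a closure that calls nhl_goals_many(10) should time the real scrape.

```php
<?php
// Run $fn once and return array(result, elapsed seconds).
// timed() is a hypothetical helper, not part of any library.
function timed($fn) {
  $start = microtime(true);
  $result = call_user_func($fn);
  $elapsed = microtime(true) - $start;
  return array($result, $elapsed);
}

// Stand-in workload: pretend to wait 50 ms on the network.
// To time the real thing, use: function () { return nhl_goals_many(10); }
list($result, $seconds) = timed(function () {
  usleep(50000);
  return 'done';
});

printf("Took %.2f seconds\n", $seconds);
```

Timings vary a lot with network conditions, so it's worth running a measurement a few times before drawing conclusions.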

Getting up to speed

It's slow because we're fetching the pages sequentially. Every time we fetch a page, we wait for the request to reach the nhl.com server, for the server to respond, and for the response to come back. Only then do we request the next page. It would be much faster to send many requests at once, and then wait for them all to come back. You might think you can only do that on a platform built around asynchronous I/O, like Node.js. But it's easy to do in PHP as well!

We'll use the RollingCurl library. We can download RollingCurl.php and Request.php into a nearby directory, so they're available to our code. Then we'll instantiate a RollingCurl object, and set a callback on it to save the response to each URL request. We'll request all the URLs at once, and when they're all done, we'll merge all the responses together:

    // Pull in RollingCurl.
    require_once 'include/Request.php';
    require_once 'include/RollingCurl.php';

    function nhl_goals_rolling($pages) {
      $rolling = new \RollingCurl\RollingCurl();

      // Create a list of URLs, and add each one to our RollingCurl.
      $urls = array();
      for ($i = 1; $i <= $pages; ++$i) {
        $url = nhl_goals_url($i);
        $urls[] = $url;
        $rolling->get($url);
      }

      // Store the result for each URL, as responses come in.
      $results = array();
      $rolling->setCallback(function($req, $rolling) use (&$results) {
        $html = $req->getResponseText();
        $scraped = nhl_goals_scrape($html);
        $results[$req->getUrl()] = $scraped;
      });

      // Run all the URL requests at once.
      $rolling->execute();

      // Collate results in page order, skipping any URL with no response.
      $return = array();
      foreach ($urls as $url) {
        if (isset($results[$url])) {
          $return = array_merge($return, $results[$url]);
        }
      }
      return $return;
    }

    print_r(nhl_goals_rolling(10));

We get the same output as before, but now it takes only two seconds. Much better! You can apply this technique to any other paged website that holds data you need. Just please be a good web citizen and respect the site you're scraping; don't hit it with too many requests.
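One way to keep the load modest is to split the URL list into small batches and pause between them. The following is a minimal sketch under stated assumptions, not a recommendation of specific limits: fetch_in_batches() and its parameters are hypothetical names, and the fetcher is passed in as a callable so that a stand-in can be used here to run the example offline. In real use, the callable would be whatever parallel fetcher you prefer, returning an array keyed by URL.

```php
<?php
// Call $fetch_batch on at most $batch_size URLs at a time, pausing
// $pause seconds between batches. $fetch_batch must return an array
// keyed by URL. All names here are hypothetical.
function fetch_in_batches(array $urls, $fetch_batch, $batch_size = 5, $pause = 1.0) {
  $results = array();
  $batches = array_chunk($urls, $batch_size);
  $last = count($batches) - 1;
  foreach ($batches as $i => $batch) {
    $results += call_user_func($fetch_batch, $batch);
    // No need to pause after the final batch.
    if ($i < $last && $pause > 0) {
      usleep((int) round($pause * 1000000));
    }
  }
  return $results;
}

// Stand-in fetcher so the sketch runs offline: it fetches nothing and
// just records the size of the batch each URL travelled in.
$fake_fetch = function (array $batch) {
  return array_fill_keys($batch, count($batch));
};

$urls = array();
for ($i = 1; $i <= 7; ++$i) {
  $urls[] = 'http://example.com/stats?pg=' . $i;
}

$sizes = fetch_in_batches($urls, $fake_fetch, 3, 0.1);
print_r($sizes);
```

With seven URLs and a batch size of three, the stand-in fetcher is called three times, with three, three, and one URL respectively. Sensible values for the batch size and pause depend on the site being scraped.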

Here's a complete Drupal module using this example, so you can try it out at home:

Other options

If you're interested in making fast web requests from PHP, here are some other options:

- The curl_multi_* functions are what RollingCurl uses internally. They work, but they're not so easy to use.

- PHP-multi-curl is another wrapper around curl_multi_*. It's similar to RollingCurl.

- The httprl module provides a parallel request API for Drupal. You might like this if you'll only be using your code in Drupal sites.
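To give a feel for the first option, here's a rough sketch of fetching several URLs at once with the raw curl_multi_* functions. It assumes the PHP curl extension is installed. fetch_all() is a hypothetical name, the timeout is arbitrary, and error handling is left out, so treat it as an illustration of the moving parts rather than production code.

```php
<?php
// Fetch several URLs concurrently and return an array mapping each URL
// to its response body. fetch_all() is a hypothetical helper; failed
// transfers are not reported here and may simply yield an empty body.
function fetch_all(array $urls) {
  $multi = curl_multi_init();
  $handles = array();

  // Create one easy handle per URL and register it with the multi handle.
  foreach ($urls as $url) {
    $ch = curl_init($url);
    curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);
    curl_setopt($ch, CURLOPT_TIMEOUT, 30);
    curl_multi_add_handle($multi, $ch);
    $handles[$url] = $ch;
  }

  // Drive all the transfers until none are still running.
  do {
    $status = curl_multi_exec($multi, $running);
    if ($running) {
      // Wait for network activity instead of spinning in a tight loop.
      curl_multi_select($multi, 1.0);
    }
  } while ($running && $status === CURLM_OK);

  // Collect the bodies keyed by URL, so arrival order doesn't matter.
  $results = array();
  foreach ($handles as $url => $ch) {
    $results[$url] = curl_multi_getcontent($ch);
    curl_multi_remove_handle($multi, $ch);
  }
  curl_multi_close($multi);
  return $results;
}
```

Something like print_r(array_map('strlen', fetch_all($urls))) would show how many bytes came back for each URL. Note that this sketch starts every request at once, with no cap on how many are in flight.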

I personally favour RollingCurl, though. Both its API and its implementation are simple and comprehensible. It also limits the number of requests that are in flight at the same time, so you don't accidentally run a denial-of-service attack on the server you're hitting! Finally, it has a Request::setExtraInfo() function to associate arbitrary data with each request, which can help you keep track of all the different responses, even though they arrive out of order.

Which do you prefer?